Addressing bias in image-generating AI through ethics-driven engineering and empirical validation.

Overview

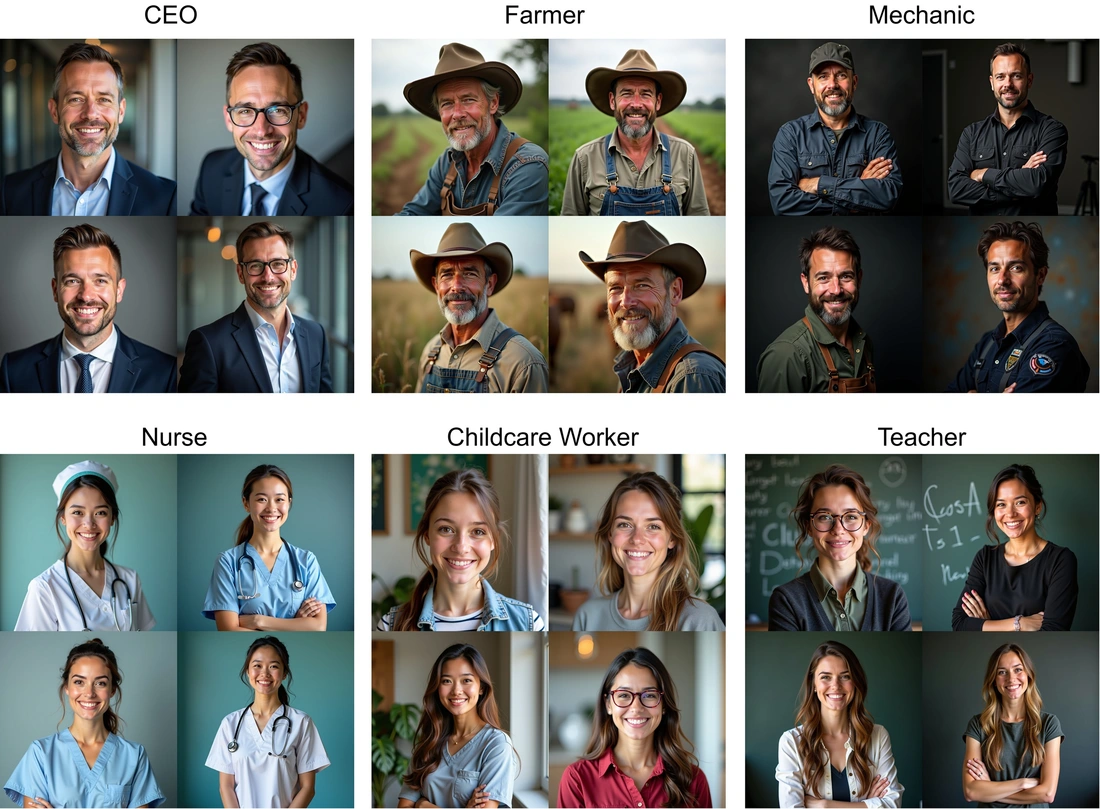

Text-to-image generation models are widely used across various industries, including mobility and safety-critical systems, but they often reflect bias. The EvEn FAIr project develops an open, standards-aligned framework to evaluate and mitigate bias in generative AI, with a focus on fairness in image generation.

The project combines empirical ethics and AI engineering. One research stream investigates how users perceive fairness and which biases they consider unacceptable. These insights are translated into technical requirements that guide the development of fairness-aware AI systems. Another stream focuses on implementing and validating methods, such as causal fairness techniques and feedback loops, to evaluate and reduce bias in both discriminative and generative models.

Through this interdisciplinary approach, EvEn FAIr contributes to the development of transparent and trustworthy AI, supporting future certification under frameworks such as the EU AI Act and relevant DIN standards.

Project team

CVIMS (Computer Vision for Intelligent Mobility Systems) specializes in image understanding algorithms and in generating synthetic image data with generative models across various modalities. These competencies form the technical foundation of the EvEn FAIr project, which brings together experts in ethics and AI to address fairness in generative systems. EvEn FAIr also has the potential to establish methodological frameworks for other CVIMS projects.

EvEn FAIr is a collaborative initiative between Technische Hochschule Ingolstadt (THI) and TechHub by efs. The project brings together an interdisciplinary team of experts, including:

- Prof. Dr. Torsten Schön (CVIMS, THI) coordinates the overall project.

- Prof. Dr. Matthias Uhl (University of Hohenheim) serves as external advisor.

- Dr. Florian Richter (THI) is responsible for researching public perceptions of fairness in technical systems.

- Venkatesh Thirugnana Sambandham (PhD student, CVIMS, THI) focuses on developing methods for fairness evaluation and bias mitigation.

- Megan Smith (research assistant, THI) researches algorithmic fairness in AI-generated images with the aim of creating ethical guidelines for text-to-image generators, and assists with planning empirical research and teaching research methods.

- A specialized team from TechHub by efs evaluates fairness in discriminative models used to infer demographic attributes from images and develops mitigation strategies for image generation algorithms.

Together, the team is developing strategies to improve the fairness and inclusivity of AI-generated images.

Methodology for Fairness Evaluation

EvEn FAIr follows an integrated approach that combines ethical reflection with technical development. One research group explores how people perceive bias and what they expect of fairness. A second group uses this feedback to translate societal values into measurable technical criteria, which are applied to study and mitigate bias during the training of both discriminative and generative AI models.
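To illustrate what a "measurable technical criterion" could look like, the following is a minimal sketch and not the project's actual metric. It assumes that a demographic classifier has already assigned one group label to each image generated from a prompt, and that a target distribution has been chosen (for instance, informed by the empirical ethics research). The function names, the group labels, and the choice of total variation distance are all hypothetical and serve only as an example.

```python
from collections import Counter


def attribute_distribution(labels):
    """Return the empirical share of each label in a list of inferred attributes."""
    if not labels:
        raise ValueError("labels must not be empty")
    counts = Counter(labels)
    total = len(labels)
    return {label: count / total for label, count in counts.items()}


def total_variation_distance(observed, target):
    """Half the L1 distance between two discrete distributions.

    0 means the distributions are identical, 1 means they do not overlap.
    """
    keys = set(observed) | set(target)
    return 0.5 * sum(abs(observed.get(k, 0.0) - target.get(k, 0.0)) for k in keys)


# Hypothetical labels inferred for 10 images generated from a single prompt
# (illustrative values only, not project data).
inferred = ["group_a"] * 2 + ["group_b"] * 8

# Assumed target: equal representation of both groups.
target = {"group_a": 0.5, "group_b": 0.5}

observed = attribute_distribution(inferred)
gap = total_variation_distance(observed, target)
print(f"observed shares: {observed}, gap to target: {gap:.2f}")
```

A gap near 0 would indicate that the generated images match the chosen target distribution for that prompt, while larger values indicate a stronger skew. Which attributes to measure and which target is appropriate are exactly the questions the ethics-focused research stream is meant to inform.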

Key Features of AI Fairness Framework

- Interdisciplinary approach: Combines empirical ethics and technical research to define fairness in AI based on societal values and stakeholder expectations.

- Evaluation of generative AI fairness: Develops the first framework to systematically analyze and quantify fairness in image-generating AI models.

- Integration into model training: Enables feedback-based bias correction during training of both discriminative and generative models.

- Technical guidelines for fair AI: Translates ethical principles into concrete technical criteria to support compliance with the EU AI Act and DIN standards.

Deliverables and Impact

The project will develop new methods to ensure fairness in generative models and connect them with insights from the evaluation of discriminative models. The aim is to help establish AI systems that reduce prejudice and unfair treatment.

Results will be integrated into teaching at THI (e.g., in Bachelor's/Master's programs in AI or Computer Science) and shared via open-source packages (e.g., training scripts, data, and model architectures).

Future projects will reuse this foundation for broader research into fairness and ethics.

THI Contact

Prof. Dr. Torsten Schön

Phone: +49 841 9348-2335

Room: K201

E-Mail: Torsten.Schoen@thi.de

Venkatesh Thirugnana Sambandham

Phone: +49 841 9348-6535

Room: K203

E-Mail: Venkatesh.ThirugnanaSambandham@thi.de
