Research Areas

What we usually don’t even think of as tasks – hearing someone speak, pronouncing words and sentences ourselves, understanding written text and putting our thoughts into words – are actually complex processes. This becomes obvious when you try to replicate them using technology. And this is precisely the area our team focuses on. We are working on a variety of topics:

- Multilingual Speech and Text Understanding

- Speech and Speaker Recognition

- Large Language Models

- Topic Segmentation

- Machine Translation

- Knowledge Graph (Learning)

- Recommendation Systems

- Synthetic Data Generation

An if you are interested in working with us – don’t hesitate to contact our department. We are always looking for graduate students to join our projects, share their theses and find new and exciting ways of collaboration!

Ongoing Projects

Derivation of AI-based learning paths for students

Development of an AI tutor for an innovative learning experience platform

Digitalization of the customer interface in the local public transport

Large Language Models and Software Development

Interdisciplinary dialogue, extraordinary events and innovative ideas for sustainable regional development.

Interdisciplinary approach to applying AI in teaching and preparing students for an international AI working environment.

A AI-based system is designed to keep outdated programming languages usable, migrate programs, and improve itself through company-specific data.

Cooperation project with KU, Brigk and IFG with a focus on MINT education

THI Videocommunity offers tools, environment and guidance for creating educational videos

This project explores the capability of Large Language Models (LLMs) to understand and apply traffic regulations effectively.

AI-Mirror project aims to develop and perfect the real-time face replacement technologies

HPC offers a flexible and effective parallel processing platform

Completed Projects

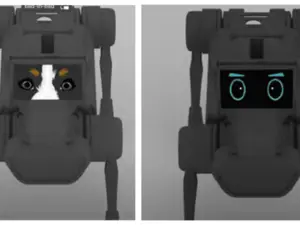

Entwicklung eines empathischen Roboters

Multi-person VR for the innovative learning and working of tomorrow.

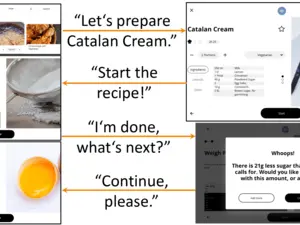

UX-Design Master Project about multi-modal interaction with a smart kitchen device

2024

Autoren: Ankit Kumar and Munir Georges

Link: https://link.springer.com/chapter/10.1007/978-3-031-70566-3_24

Abstract:

Intent detection, a crucial task in spoken language understanding (SLU) systems, often faces challenges due to the requirement for extensive labeled training data. However, the process of collecting such data is both resource-intensive and time-consuming. To mitigate these challenges, leveraging Semi-Supervised Generative Adversarial Networks (SS-GANs) presents a promising strategy. By employing SS-GANs, it becomes possible to fine-tune pre-trained transformer models like BERT using unlabeled data, thereby improving intent detection performance without the need for extensive labeled datasets. This article introduces a novel approach called Joint-Average Mean and Variance Feature Matching GAN (JAMVFM-GAN) with the additional objective to improve SS-GAN learning. By incorporating information about both mean and variance during latent feature learning, JAMVFM-GAN aims to more accurately capture the underlying data manifold. Except JAMVFM, we proposed an additional loss function for discriminator to enhance its discriminative capabilities. Experimental results demonstrate that JAMVFM-GAN along with additional objective function outperforms traditional SS-GAN in Intent Detection tasks. The results indicate the maximum relative improvement of 3.84%, 3.85%, and 1.04% over the baseline on the ATIS, SLURP, and SNIPS datasets, respectively.

Autoren: Gokul Srinivasagan and Munir Georges

Link: https://link.springer.com/chapter/10.1007/978-3-031-70566-3_1

Abstract:

RAG-based models have gained significant attention in recent times mainly due to their ability to address some of the key challenges like mitigation hallucination, incorporation of knowledge from external sources and traceability in the reasoning process. While numerous works in the textual domain leverage additional knowledge to enhance performance, the adaptability of RAG-based models in the speech domain remains largely unexplored. This approach is particularly well-suited for transport applications, where there is a constant change in the schedule and the model needs to be aware of these changes to provide updated information to users. The datasets for such tasks are lacking, and the applicability of language models in the transport domain remains underexplored. In this work, we try to address these problems by exploiting pretrained large language models to generate a synthetic dataset for transport applications. We also utilize the pretrained language models to evaluate the performance of our cascaded RAG system. The experimental results revealed that our approach is less prone to hallucination and can generate grammatically correct responses to user queries.

Authors: Steffen Freisinger, Fabian Schneider, Aaricia Herygers, Munir Georges, Tobias Bocklet, Korbinian Riedhammer

Link: https://www.isca-speech.org/archive/slate_2023/freisinger23_slate.html

Abstract:

The current shift from in-person to online education, e.g., through video lectures, requires novel techniques for quickly searching for and navigating through media content. At this point, an automatic segmentation of the videos into thematically coherent units can be beneficial. Like in a book, the topics in an educational video are often structured hierarchically. There are larger topics, which in turn are divided into different subtopics. We thus propose a metric that considers the hierarchical levels in the reference segmentation when evaluating segmentation algorithms. In addition, we propose a multilingual, unsupervised topic segmentation approach and evaluate it on three datasets with English, Portuguese and German lecture videos. We achieve WindowDiff scores of up to 0.373 and show the usefulness of our hierarchical metric.

2023

Authors: Glocker, Kevin and Herygers, Aaricia and Georges, Munir

Link: https://www.isca-speech.org/archive/interspeech_2023/glocker23_interspeech.html

Abstract:

This paper proposes Allophant, a multilingual phoneme recognizer. It requires only a phoneme inventory for cross-lingual transfer to a target language, allowing for low-resource recognition. The architecture combines a compositional phone embedding approach with individually supervised phonetic attribute classifiers in a multi-task architecture. We also introduce Allophoible, an extension of the PHOIBLE database. When combined with a distance based mapping approach for grapheme-to-phoneme outputs, it allows us to train on PHOIBLE inventories directly. By training and evaluating on 34 languages, we found that the addition of multi-task learning improves the model's capability of being applied to unseen phonemes and phoneme inventories. On supervised languages we achieve phoneme error rate improvements of 11 percentage points (pp.) compared to a baseline without multi-task learning. Evaluation of zero-shot transfer on 84 languages yielded a decrease in PER of 2.63 pp. over the baseline.

Authors: Bruno J. Souza, Lucas C. de Assis, Dominik Rößle, Roberto Z. Freire, Daniel Cremers, Torsten Schön, Munir Georges

Link: https://ieeexplore.ieee.org/document/10026940

Abstract:

The use of simulation environments is becoming more significant in the development of autonomous cars, as it allows for the simulation of high-risk situations while also being less expensive. In this paper, we presented a framework that allows the creation of an autonomous vehicle in the AirSim simulation environment and then transporting the simulated movements to the TurtleBot. To maintain the car in the correct direction, computer vision techniques such as object detection and lane detection were assumed. The vehicle's speed and steering are both determined by Proportional-Integral-Derivative (PID) controllers. A virtual personal assistant was developed employing natural language processing to allow the user to interact with the environment, providing movement instructions related to the vehicle's direction. Additionally, the conversion of the simulator movements for a robot was implemented to test the proposed system in a practical experiment. A comparison between the real position of the robot and the position of the vehicle in the simulated environment was considered in this study to evaluate the performance of the algorithms.

Authors: Liu Chen, Michael Deisher, Munir Georges

Link: https://doi.org/10.1109/ICASSP49357.2023.10096121

Abstract:

This paper describes an end-to-end (E2E) neural architecture for the audio rendering of small portions of display content on low resource personal computing devices. It is intended to address the problem of accessibility for vision-impaired or vision-distracted users at the hardware level. Neural image-to-text (ITT) and text-to-speech (TTS) approaches are reviewed and a new technique is introduced to efficiently integrate them in a way that is both efficient and back-propagate-able, leading to a non-autoregressive E2E image-to-speech (ITS) neural network that is efficient and trainable. Experimental results are presented showing that, compared with the non-E2E approach, the proposed E2E system is 29% faster and uses 19% fewer parameters with a 2% reduction in phone accuracy. A future direction to address accuracy is presented.

Authors: Panagiotis Pagonis, Kai Hartung, Di Wu, Munir Georges, Sören Gröttrup

Link: https://oa.tib.eu/renate/server/api/core/bitstreams/9a028a47-5c64-4794-8dd7-c9582d4b9d35/content

Abstract:

Knowledge Tracing (KT) aims to predict the future performance of students by tracking the development of their knowledge states. Despite all the recent progress made in this field, the application of KT models in education systems is still restricted from the data perspectives: 1) limited access to real life data due to data protection concerns, 2) lack of diversity in public datasets, 3) noises in benchmark datasets such as duplicate records. To resolve these problems, we simulated student data with three statistical strategies based on public datasets and tested their performance on two KT baselines. While we observe only minor performance improvement with additional synthetic data, our work shows that using only synthetic data for training can lead to similar performance as real data.

Authors: Aaricia Herygers, Vass Verkhodanova, Matt Coler, Odette Scharenborg, Munir Georges

Link: https://www.essv.de/paper.php?id=1186

Abstract:

Research has shown that automatic speech recognition (ASR) systems exhibit biases against different speaker groups, e.g., based on age or gender. This paper presents an investigation into bias in recent Flemish ASR. Seeing as Belgian Dutch, which is also known as Flemish, is often not included in Dutch ASR systems, a state-of-the-art ASR system for Dutch is trained using the Netherlandic Dutch data from the Spoken Dutch Corpus. Using the Flemish data from the JASMIN-CGN corpus, word error rates for various regional variants of Flemish are then compared. In addition, the most misrecognized phonemes are compared across speaker groups. The evaluation confirms a bias against speakers from West Flanders and Limburg, as well as against children, male speakers, and non-native speakers.

Authors: Kai Hartung, Aaricia Herygers, Shubham Vijay Kurlekar, Khabbab Zakaria, Taylan Volkan, Sören Gröttrup, Munir Georges

Link: https://link.springer.com/chapter/10.1007/978-3-031-40498-6_8

Abstract:

Biases induced to text by generative models have become an increasingly large topic in recent years. In this paper we explore how machine translation might introduce a bias in sentiments as classified by sentiment analysis models. For this, we compare three open access machine translation models for five different languages on two parallel corpora to test if the translation process causes a shift in sentiment classes recognized in the texts. Though our statistic test indicate shifts in the label probability distributions, we find none that appears consistent enough to assume a bias induced by the translation process.

Authors: Thomas Ranzenberger, Tobias Bocklet, Steffen Freisinger, Lia Frischholz, Munir Georges, Kevin Glocker, Aaricia Herygers, René Peinl, Korbinian Riedhammer, Fabian Schneider, Christopher Simic, Khabbab Zakaria

Link: https://www.essv.de/paper.php?id=1188

Abstract:

The usage of e-learning platforms, online lectures and online meetings for academic teaching increased during the Covid-19 pandemic. Lecturers created video lectures, screencasts, or audio podcasts for online learning. The Hochschul-Assistenz-System (HAnS) is a learning experience platform that uses machine learning (ML) methods to support students and lecturers in the online learning and teaching processes. HAnS is being developed in multiple iterations as an agile open-source collaborative project supported by multiple universities and partners. This paper presents the current state of the development of HAnS on German video lectures.

Authors: Steffen Freisinger, Fabian Schneider, Aaricia Herygers, Munir Georges, Tobias Bocklet, Korbinian Riedhammer

Link: https://www.isca-speech.org/archive/slate_2023/freisinger23_slate.html

Abstract:

The current shift from in-person to online education, e.g., through video lectures, requires novel techniques for quickly searching for and navigating through media content. At this point, an automatic segmentation of the videos into thematically coherent units can be beneficial. Like in a book, the topics in an educational video are often structured hierarchically. There are larger topics, which in turn are divided into different subtopics. We thus propose a metric that considers the hierarchical levels in the reference segmentation when evaluating segmentation algorithms. In addition, we propose a multilingual, unsupervised topic segmentation approach and evaluate it on three datasets with English, Portuguese and German lecture videos. We achieve WindowDiff scores of up to 0.373 and show the usefulness of our hierarchical metric.

2022

Authors: Kai Hartung, Gerhard Jäger, Sören Gröttrup, Munir Georges

Link: https://aclanthology.org/2022.sigtyp-1.3/

Abstract:

In this study we address the question to what extent syntactic word-order traits of different languages have evolved under correlation and whether such dependencies can be found universally across all languages or restricted to specific language families. To do so, we use logistic Brownian Motion under a Bayesian framework to model the trait evolution for 768 languages from 34 language families. We test for trait correlations both in single families and universally over all families. Separate models reveal no universal correlation patterns and Bayes Factor analysis of models over all covered families also strongly indicate lineage specific correlation patters instead of universal dependencies.

Authors: Kevin Glocker, Munir Georges

Link: https://aclanthology.org/2022.icnlsp-1.21

Abstract:

This paper proposes a method for multilingual phoneme recognition in unseen, low resource languages. We propose a novel hierarchical multi-task classifier built on a hybrid convolution-transformer acoustic architecture where articulatory attribute and phoneme classifiers are optimized jointly. The model was evaluated on a subset of 24 languages from the Mozilla Common Voice corpus. We found that when using regular multi-task learning, negative transfer effects occurred between attribute and phoneme classifiers. They were reduced by the hierarchical architecture. When evaluating zero-shot crosslingual transfer on a data set with 95 languages, our hierarchical multi-task classifier achieves an absolute PER improvement of 2.78% compared to a phoneme-only baseline.

2021

Authors: Caroline Kendrick, Mariano Frohnmaier, Munir Georges

Link: https://aclanthology.org/2021.icnlsp-1.30

Abstract:

An important degree of accessibility, novelty, and ease of use is added to smart kitchen devices with the integration of multimodal interactions. We present the design and prototype implementation for one such interaction: guided cooking with a smart food processor, utilizing both voice and touch interface. The prototype’s design is based on user research. A new speech corpus consisting of 2,793 user queries related to the guided cooking scenario was created. This annotated data set was used to train and test the neural-network-based natural language understanding (NLU) component. Our evaluation of this new in-domain NLU data set resulted in an intent detection accuracy of 97% with high reliability when tested. Our data and prototype (VoiceCookingAssistant, 2021) are open-sourced to enable further research in audio-visual interaction within the smart kitchen context.

The "Open Research Space" (ORS) was established in the summer semester of 2022 to bring together students interested in AI and to promote scientific exchange between students among themselves and, above all, involving professors as well.

The ORS offers space for all topics and activities that students and employees are interested in:

- Tours of THI laboratories and facilities

- Initial vague ideas, up to

- App prototypes, but also

- Seminar and final theses or

- Group projects

are presented here. In a cozy atmosphere, the ideas can be discussed and further developed in the presence of academic staff and professors. In addition to THI internal content, the ORS format also offers the opportunity to invite external interested parties. In this way, both sides benefit: Students gain an insight into applied (research) questions from the real world and external parties can get to know interested students at an early stage for potential collaboration.

Summer Semester 2025

The Open Research Space will continue in the summer semester of 2025.

Follow us on social media (https://linktr.ee/thi_ors) or on Moodle for more information.

Previous Events and Lectures

- Lab Tour: Aviation Lab & Tour to brigk

- Tour: Center of Entrepreneurship

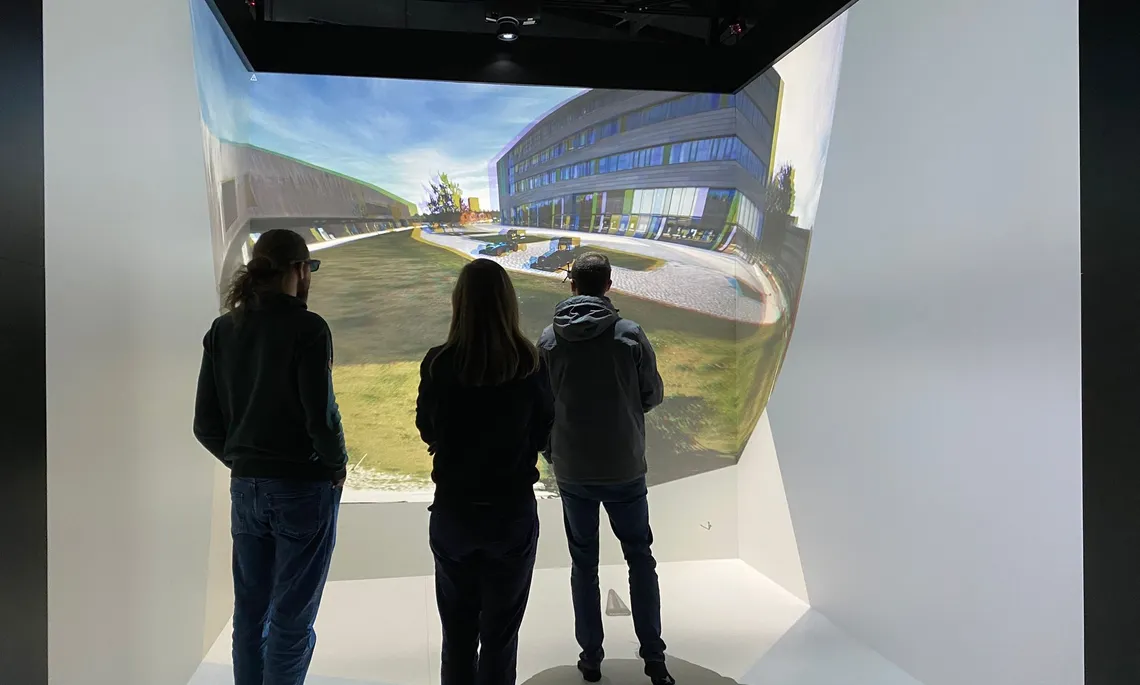

- Lab Tour: VR Lab

- Lab Tour: Acoustics Lab

- Student Presentation: AI Based Identification and Relevance Classification of Company Names in News Articles + Discussion: ChatGPT

- Lab Tour: Aviation Lab

- Workshop: Build your own website with Github Pages

- Student Presentation: Petly

- AI Community Presentation: Federated Learning + Student Presentation: Machine Learning Credit Analysis

- AI Community Presentations: LAIKA + Using Machine Learning to Fight Misinformation

- Workshop: Introduction to Phonetics for AI Students

- AI Community Presentation: Social Media Data Analysis

- Lab Tour: Car2X Lab

- AI Community Presentation: Renumics

- Paper Discussion: Trustworthy AI

- Paper Discussion: Attention is all you need

- Workshop: How to create a good CV?

- ORS Introduction + HAnS

- Introduction + Presentation: Bias in Flemish Speech Recognition

- Lab Tour: Aviation Lab + Presentation: Computer-assisted Pronunciation Training for Mandarin

- Presentation: Acceptance of AI

- Workshop: Introduction to Phonetics for AI Students

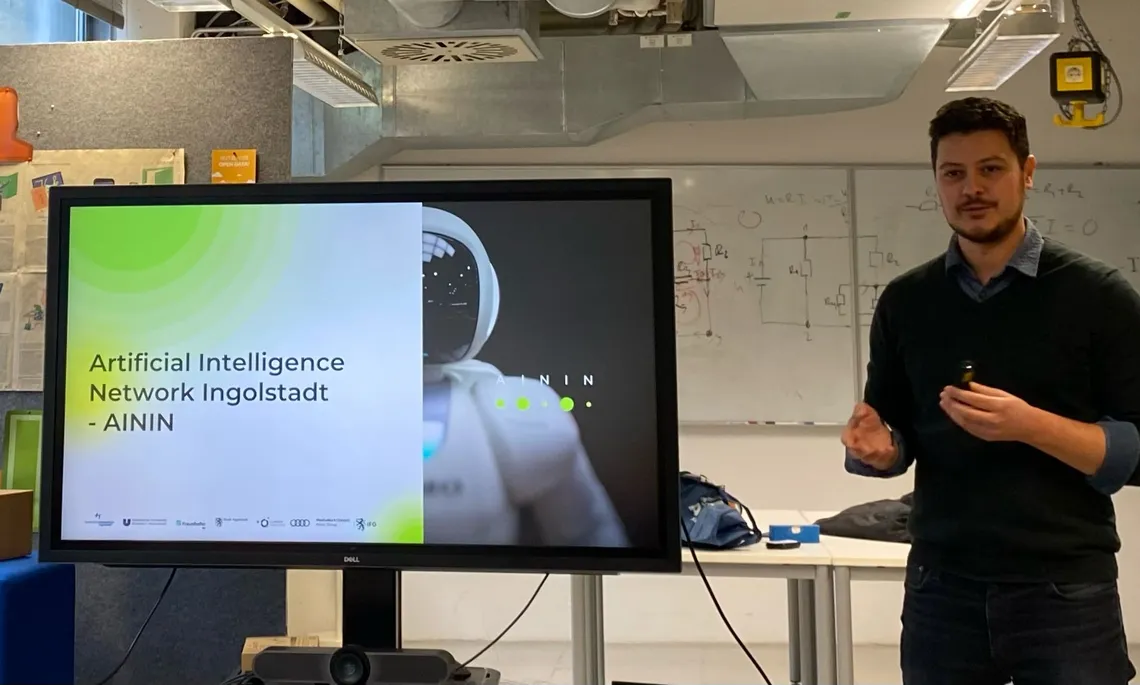

- AI Community Presentation: AININ

- Lab Tour: VR Lab

- Presentation: Center of Entrepreneurship + Student Presentation: Social Media Web App + Student Presentation: UX Design

- Junior/PhD Panel Session

- Senior/Professor Panel Session

- Lab Tour: Cybersecurity Lab

- AI Community Presentation: Role of AI in Interpreting

- Open Discussion Round

- Victor Hug: "Vergleich von Features für MER (Music Emotion Recognition)"

- Noah Dick: "Emotionsextraktion aus Gehirnaktivitätsvoxeln"

- Phillip Weinmann: "Laser mit Sprachsteuerung"

- Taylan Volkan: "Sentiment Bias in Machine Translation"

- UXD Masterstudierende: ENAMOUR Projekt

- Kai Hartung: "Statistische Methode zur Untersuchung von Korrelationen in der Evolution von Sprachen"

- Cedric Friedrichs: "Automatisierte Extraktion strukturierter Daten aus Informationssicherheits-Audits"

- Moritz Kastler: "Transferaufgabe von emotionaler Gesichtserkennung"

- Aaricia Herygers: "Spraakherkenning, wa is da? — Bias in Flemish Speech Recognition"

- Kevin Glocker: "Unsupervised End-to-End Computer-Assisted Pronunciation Training for Mandarin"

- Aaricia Herygers: "Introduction to Phonetics for AI Students"

Impressions

ENAMOUR Projekt Presentation

Center of Entrepreneurship Introduction

FAQs about participating in ORS

Q: Who is it suitable for?

A: For anyone who is interested in open exchange and wants to develop further.

Q: What prerequisites do I need to participate?

A: Absolutely none! Everyone is welcome, whether you are in your first semester or your last, whether you can program or not, whether you engage with these topics in your free time or not. Your opinions and ideas are important to us all, let us share them!

Q: Do I have to work on my own projects or research?

A: No! You are welcome to present your own projects, but you don’t have to. You can also just sit comfortably on our couch and participate.

Q: Do I have to attend regularly?

A: Absolutely not! You can come and go as it suits you best. If you don’t have time or feel like coming, you don’t need to notify anyone.

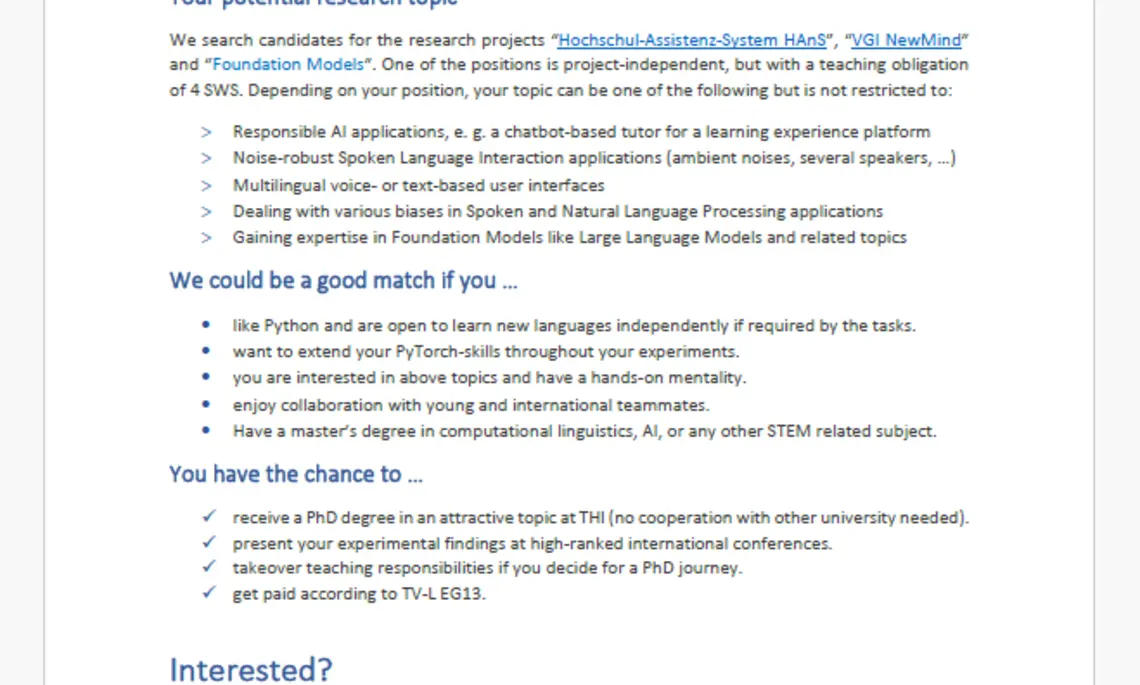

Open Thesis

Regardless of your career choice, bachelor's and master's theses are important projects that allow you to demonstrate your skills and research competencies. Our department offers numerous opportunities and we are always happy to welcome students who are interested in working with us!

Here are just a few examples of topics you could tackle in our department:

- "Low Power AI": The energy consumption for training and inference of a Large Language Model (LLM) and models for Automatic Speech Recognition (ASR) is very high. The subject of the research is the implementation and comparison of various methods and techniques to reduce energy consumption in the application field of speech and text understanding. Possible approaches are to be discussed in the area of knowledge distillation, factorization, quantization or neuromorphic computing.

- "Chatbots": Many chatbot applications in the commercial environment require a "task-oriented dialogue", e.g. when reserving hotels, transferring money or reporting claims to the insurer. These dialogues are not very natural language based in the state of the art. The subject of the research is the implementation and comparison of various methods and techniques that enable natural language interaction and also take real-time information into account, e.g. recommendations for events in the region. Possible approaches are to be discussed in combination with a Large Language Model (LLM).

- "Automatic Legal Text Processing": The automatic processing of legal texts is a challenge. The texts are semi-structured, linked in many ways and sometimes very extensive. The subject of the research is the implementation and comparison of various methods and techniques that enable the robust and comprehensible processing of legal texts. Possible approaches are to be discussed in the areas of statistical parsing, knowledge graphing and large language model (LLM).

- "genAI for Code": With generative AI, programming has changed significantly and will change even further in the coming years. Writing the source code is giving way to a description in natural language or in graphical form. Checking source code is becoming more important, which is made very difficult by numerous security additions. The subject of the research is the generation of source code and information that facilitates checking.

If you are interested in working in our department, suggesting your own topic or simply want to find out more, please contact Professor Georges at Munir.Georges@thi.de

The resulting software and results will be part of an open source solution, which will also be the basis for future scientific articles.

Please note that no work with a confidentiality notice will be supervised.

![[Translate to English:] Logo Akkreditierungsrat: Systemakkreditiert](/fileadmin/_processed_/2/8/csm_AR-Siegel_Systemakkreditierung_bc4ea3377d.webp)